This page offers a collection of skills assessment tools and surveys for help in analyzing individual skills, profiling the skills of teams (groups of presenters), or for rating speakers based on audience evaluations (collective responses).

- Individual Skills Assessment – Single Observer – 21 Skill Categories

- Audience Evaluation – Multiple Observers – 5 Grouped Skill Categories

Skills Assessment Evaluation Tool

Drawing on published research, we created an assessment tool that lets individuals assess themselves and lets managers assess others. Sometimes external videos are submitted and the assessment is done by an expert. This service is called Digital Coaching.

TRY IT! Evaluate yourself or someone else using our FREE Skills Assessment.

Just follow the link, fill in the form, and the results will be e-mailed back to you!

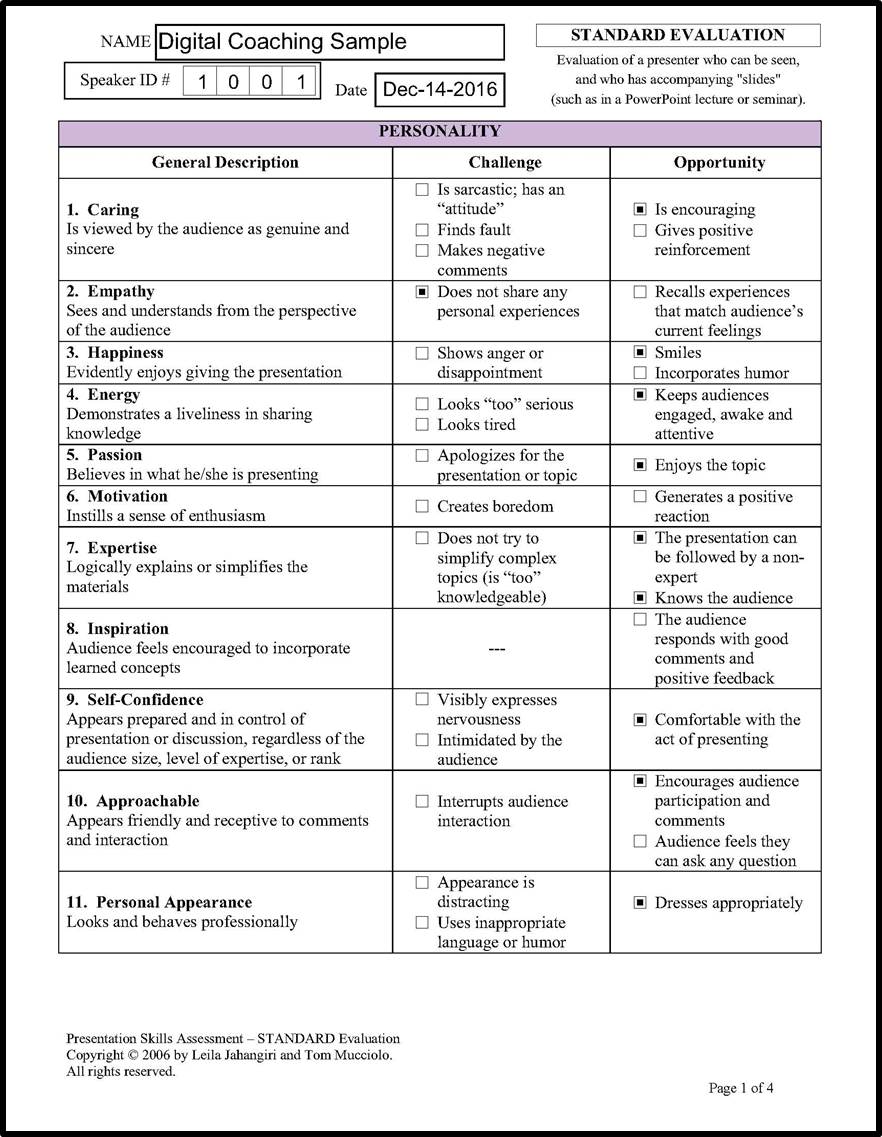

Below is a SAMPLE EVALUATION FORM (4 pages in all), from a Digital Coaching session, indicating selected observations of challenges and opportunities across 21 skill categories.

The 21 skills are grouped into the core categories of PERSONALITY, PROCESS and PERFORMANCE, followed by a RESULTS page, which calculates the effectiveness of the speaker.

The 11 PERSONALITY categories

Page 1, above, covers the PERSONALITY elements of a presenter. Challenges in this section can be overcome by actions related to the other two areas of PROCESS and PERFORMANCE.

The 5 PROCESS categories

Page 2, above, covers the five PROCESS-related areas, focusing on the skills needed to convey the message. These elements can help develop some of the personality factors. For example, if a presenter is challenged with expertise (Skill 7 on page 1), where information appears too complex, then the opportunities in Skills 12, 13 and 14 can help “simplify” the content by reducing text on visuals or by adding stories, examples and analogies to the presentation.

If this form is used by a presentations skills coach, there is an area on this page available for specific comments and suggestions for improvement, as shown in the above sample.

The 5 PERFORMANCE categories

Page 3, above, shows the PERFORMANCE-related skills. Many of these skills are clearly observed by the audience, and for that reason effective presentation training targets the areas of body language, speaking style and interaction. The skills in this area directly affect the personality elements, such as self-confidence, empathy, caring, energy, and approachability.

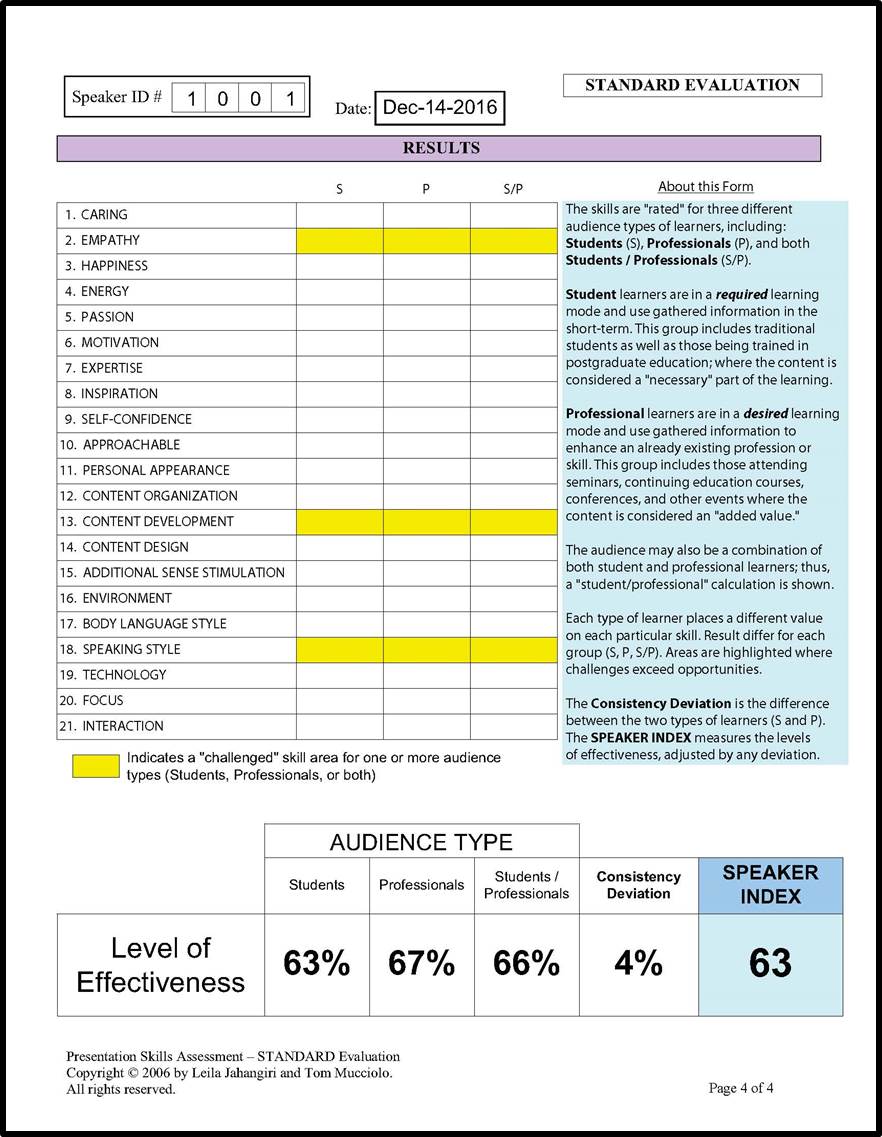

Shows ratings for different audience types

Page 4, above, highlights (in yellow) areas of concern where challenges exceed opportunities. Ratings for different audiences are shown, along with a SPEAKER INDEX.

The sample “Digital Coaching” subject above depicts a speaker who has several “challenges” to overcome and many opportunities still to take. The good news is that there is a reasonable degree of consistency (4%) across audience types. Based on this evaluation, the speaker is using skills generally applicable to both “student” and “professional” learners and will be effective in front of groups seeking general concepts or those needing to learn a process. In comparative terms, this speaker could either conduct a training session or deliver a marketing presentation with similar consistency.

The overall speaker index indicates that some additional skills are needed to maximize the speaker’s potential. For development purposes, a realistic target for this speaker would be an index of about 75, with no more than a 3% deviation, representing about a 20% increase in effectiveness.

HIGHLY effective speakers usually have a Speaker Index of 80 or higher, with a deviation of no more than 2%. These are speakers who have very few challenges and take advantage of most opportunities, with little or no deviation across audience types.

In general, a Speaker Index of 70 and a deviation of no more than 5% would be considered “effective” for most business presentations.
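The thresholds quoted above can be read as a simple banding rule. The sketch below is illustrative only: the function name `classify_speaker` and the band labels are hypothetical, and treating each quoted figure as an inclusive cut-off is an assumption rather than a description of how the assessment tool itself works.

```python
def classify_speaker(index: float, deviation_pct: float) -> str:
    """Band a speaker using the thresholds quoted in the text.

    Assumption: the quoted figures are inclusive cut-offs; the real tool may differ.
    """
    # "Highly effective": index of 80 or higher, deviation of no more than 2%.
    if index >= 80 and deviation_pct <= 2:
        return "highly effective"
    # "Effective" for most business presentations: index of 70, deviation of no more than 5%.
    if index >= 70 and deviation_pct <= 5:
        return "effective"
    # Hypothetical label for everything below the "effective" band.
    return "needs development"


print(classify_speaker(82, 1.5))
print(classify_speaker(75, 3))
print(classify_speaker(62, 4))
```

Note that a high index alone is not enough under this reading: a speaker with an index of 85 but a 4% deviation would fall into the “effective” band rather than the “highly effective” one.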

A Note about Audience Types

From a learning perspective, the research identifies two distinct audience types: STUDENTS and PROFESSIONALS. When groups of students are compared with groups of professionals, the findings indicate that each group weights each skill category differently. The above assessment accounts for these different perspectives and separates audiences into three groups of learners: Students, Professionals and a combination of both.

A STUDENT learner is defined as one who requires knowledge in order to participate in a desired activity. This group includes not only traditional “students” but also residents, trainees, and anyone who needs to apply learned concepts immediately. The term “student” is used for all of them in this assessment.

A professional is also a “learner” but in a slightly different sense.

A PROFESSIONAL learner is defined as one who desires knowledge in order to add to an existing activity. Professionals include those who incorporate learned concepts over a period of time. Like students, they are learning, but they are using the knowledge to augment an existing practice or job function over a longer period.

In many cases, an audience can be a mix of both student learners and professional learners, and this combination is basically an “average” of the two groups.

SPICE Model – Audience Evaluation Tool

Audience feedback is helpful to a speaker only if the results can be used for improvement. Just knowing that you are a 3 out of 5 does not tell you where you need to improve to become a 4. The only thing you do know is that you need to get better. But how? The SPICE Model is a research-supported audience evaluation that measures specific skill areas, which can then be targeted for improvement.

Using a five-point scale, from a low of 1 to a high of 5, an audience member uses the SPICE Model (or similar format) to rate the observed skills of a speaker in each of FIVE distinct categories: Speech, Presence, Interaction, Clarity, and Expertise (SPICE). Below is an example of how evaluation questions may be worded to cover these categories.

SPICE MODEL excerpt taken from a typical audience evaluation

For those who already use audience evaluations, many of these categories are likely being assessed, although the wording may be different. Therefore, existing evaluation data from standard assessments is very easy to map to the SPICE Model to measure skills. In some cases, simply adding one more item to an existing evaluation would cover all of the categories.

Even though you could measure the effectiveness of a speaker on each individual evaluation, most events treat the performance as a collective, where all evaluations are grouped together into a single assessment.

The SPICE Model “COLLECTOR”

To assist organizations with measuring gathered responses, we designed the SPICE Model Collector. Using a special input form, audience feedback can be aggregated and analyzed to provide a measurement of a speaker’s effectiveness, across different audience types, arriving at an overall SPEAKER INDEX.

The results allow a speaker to see collective ratings in five areas to help identify specific strategies for improvement at subsequent events. The measurements are equally useful to event coordinators or departmental managers who wish to see how different speakers performed, not only in relation to one another, but across multiple sessions, in order to optimize speakers for future engagements. Shown below is an analysis of 25 audience evaluations for a sample speaker.

Sample SPICE COLLECTOR measuring audience evaluations for a speaker

The SPICE Model Collector allows input for up to 1,000 evaluations for a given assessment and the form automatically analyzes the audience (learner) responses, showing the average score in each category, along with a summary of total responses. All results are tabulated according to peer-reviewed published data on audience preferences. The fact that results are based on research data reduces bias and subjectivity when calculating levels of effectiveness.
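As a rough illustration of the aggregation step described above, the sketch below averages the 1-to-5 ratings in each of the five SPICE categories and counts the responses. It is not the Collector itself: the `summarize` function, the dictionary layout, and the sample ratings are all hypothetical, and the research-based weighting that produces the SPEAKER INDEX is not reproduced because the text does not give it.

```python
from statistics import mean

CATEGORIES = ("speech", "presence", "interaction", "clarity", "expertise")
MAX_EVALUATIONS = 1000  # the text states input for up to 1,000 evaluations


def summarize(evaluations):
    """Return (total responses, average 1-5 rating per SPICE category)."""
    if not evaluations or len(evaluations) > MAX_EVALUATIONS:
        raise ValueError("expected between 1 and 1,000 evaluations")
    for ev in evaluations:
        for cat in CATEGORIES:
            if not 1 <= ev[cat] <= 5:
                raise ValueError(f"{cat} rating must be between 1 and 5")
    # Plain arithmetic mean per category, rounded for display.
    averages = {cat: round(mean(ev[cat] for ev in evaluations), 2) for cat in CATEGORIES}
    return len(evaluations), averages


# Hypothetical responses from three audience members (made-up numbers).
sample = [
    {"speech": 4, "presence": 5, "interaction": 3, "clarity": 4, "expertise": 5},
    {"speech": 3, "presence": 4, "interaction": 3, "clarity": 5, "expertise": 4},
    {"speech": 5, "presence": 4, "interaction": 3, "clarity": 3, "expertise": 5},
]
total, averages = summarize(sample)
print(total, averages)
```

Per-category averages like these are what let a speaker see which of the five areas to target, rather than a single overall score.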

The ratings are derived from the preferences of student and professional learners, each of whom listens differently, as well as those of a combined audience. To identify consistency across audience types, a deviation factor is shown. A lower number indicates a speaker who is more consistent, and therefore more versatile, across varied audiences. The combination of all these measurements is expressed as an overall SPEAKER INDEX.

Think of the index as a barometer for gauging how much of a speaker’s potential talent is being observed by the evaluating audience. Another way to look at the index is as the measurable impression made by the speaker on the group.

SPICE Model Accuracy

Our complete Skills Assessment Evaluation Tool (described above) measures 80 independent characteristics across 21 unique skill categories. Usually this type of assessment is done by one individual while observing a speaker. Audience evaluations, however, must be quick to complete. Thus, the most preferred characteristics (90% of the complete skills) have been grouped into the five categories of the SPICE Model. As a result, we raise the threshold of effectiveness when judging a speaker’s SPICE Model rating, as compared to the individual assessment.

Using the complete assessment tool, an effective speaker is one who has an index greater than 70, with a consistency deviation of 5% or less. However, when using the SPICE Model we adjust for average audience responses (which tend to be higher), and we look for an index approaching 80 and a deviation of 3% or less.
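The adjustment between the two instruments can be pictured as two sets of thresholds applied to the same pair of numbers. The sketch below is only an illustration: the names `THRESHOLDS` and `is_effective` are hypothetical, and reading “greater than 70” and “approaching 80” as inclusive cut-offs of 70 and 80 is an assumption.

```python
# Thresholds as quoted in the text. Treating them as inclusive cut-offs
# (and "approaching 80" as 80) is an assumption made for this illustration.
THRESHOLDS = {
    "complete_assessment": {"min_index": 70, "max_deviation_pct": 5},
    "spice_model": {"min_index": 80, "max_deviation_pct": 3},
}


def is_effective(instrument: str, index: float, deviation_pct: float) -> bool:
    """Check an index/deviation pair against the bar for the given instrument."""
    limits = THRESHOLDS[instrument]
    return index >= limits["min_index"] and deviation_pct <= limits["max_deviation_pct"]


# The same hypothetical scores clear one bar but not the other.
print(is_effective("complete_assessment", 74, 4))
print(is_effective("spice_model", 74, 4))
```

Keeping the thresholds in a lookup table makes the point of the passage visible: the speaker’s numbers do not change, only the bar they are measured against.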

Organizational Applications

How do the effectiveness levels help those who manage speakers? An event coordinator may notice that some speakers are rated higher for audiences of student learners, such as those attending workshops or hands-on training sessions. The same coordinator may notice that other speakers are rated much higher for professional audiences, such as those attending conceptual or keynote sessions where content is more strategic, persuasive, or theoretical. Knowing which speakers are better for specific groups makes event planning more efficient.

At future events, it may be best to assign speakers to the venues for which they are best suited, be that a training session or a keynote address. Of course, speakers should use these ratings to build skills in deficient areas in order to offer greater utility to event coordinators and managers who are trying to maximize speaking assignments.

The SPICE Model Collector is used by organizations and institutions that distribute audience evaluations and collate the responses into databases and CRM systems. Conference coordinators, meeting & convention planners, membership-based organizations, and other public forums are revamping existing audience evaluations to include the five categories, to help identify the more effective speakers for future events.